By Juliet Akoth

As AI-enabled tools enter the unpaid care economy, caregivers are raising questions about data use, accountability, and algorithmic risk.

At nine o’clock, Rosemary Kabui’s mother wakes into a day that has been rehearsed like a script. Grooming first. A piece of fruit, then a slow walk before the sun turns sharp. Later, the longest task: breakfast.

“You can’t just leave food for her,” Kabui says. “When you’re a caregiver, it’s almost like being someone’s shadow.”

The meal can take 40 minutes on a good day, an hour on a bad one. Her mother may forget to chew; she needs prompts, patience, and a calm face that does not betray urgency. Kabui, a registered dietitian, learnt early that structure isn’t a preference in dementia care; it is a kind of medicine.

“As much as their short-term memory is fading, the more they have routines, the less anxious they become,” she notes.

Across Kenya, dementia care is quietly becoming a new frontier where artificial intelligence meets gendered labour. Digital health tools, some with AI-enabled features, are beginning to enter routines like Kabui’s, even as the hands-on labour that characterises unpaid care work remains unchanged.

Tools such as chatbots, symptom checkers, and digital training platforms promise information and faster answers. But as these systems scale, they risk hardening an old reality: women absorb the emotional and physical work of caregiving while technology absorbs the information produced by that labour.

It raises the question: who benefits from the knowledge and data gathered from caregivers, especially when it is turned into a product?

Misunderstood Condition

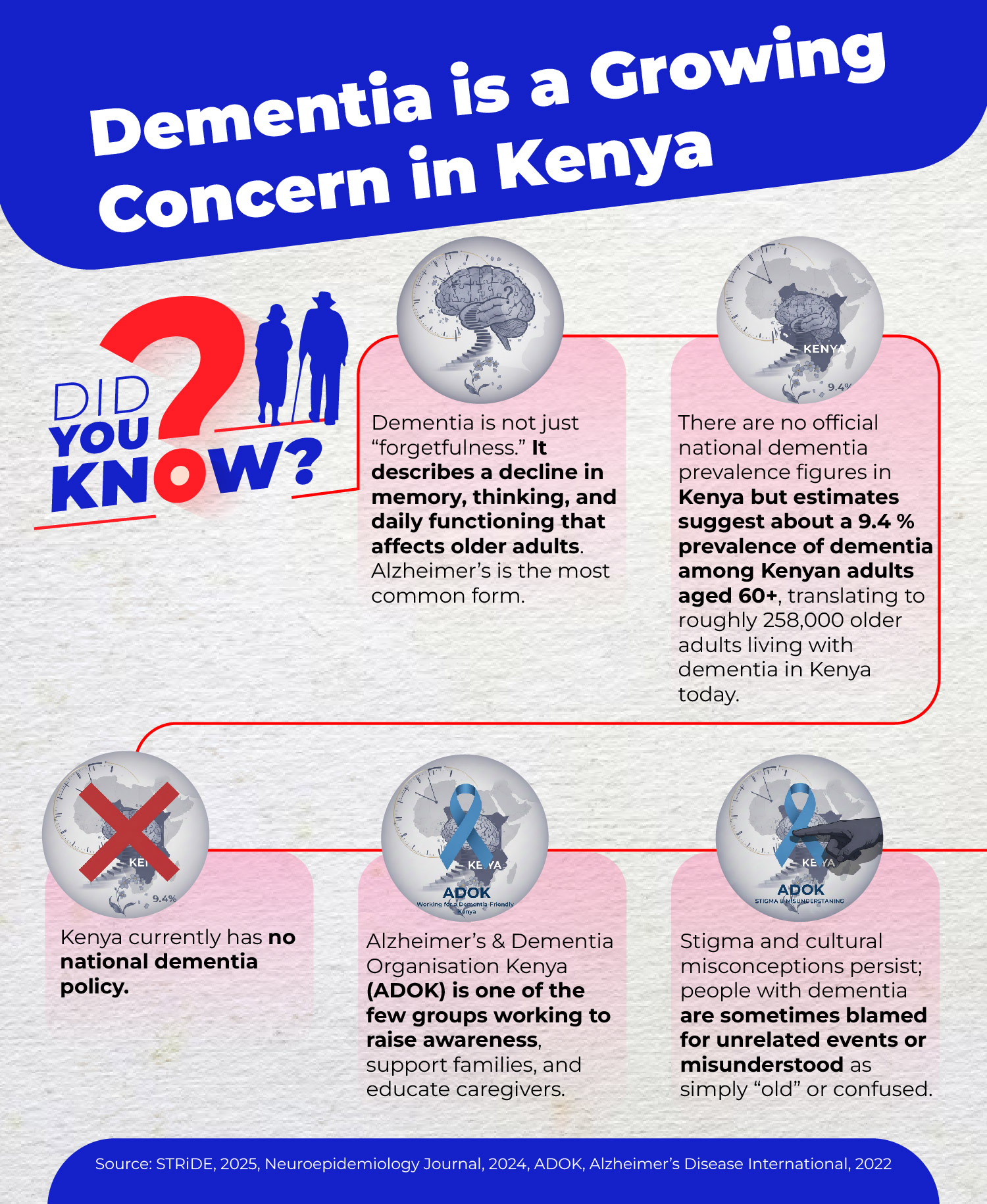

In Kenya, early signs of dementia, such as forgetting names or getting lost, are often dismissed as “just old age”, according to a 2025 article from Aga Khan University’s Brain and Mind Institute.

Kabui first noticed signs of dementia in her mother in 2019. However, a neurologist did not confirm the diagnosis until two years later, following a transient ischaemic attack (mini-stroke). What startled her was not simple forgetfulness.

“The biggest red flag was her personality change,” she says.

Her mother, once active and social, began sleeping more, speaking less, and withdrawing from crowds. Doctors first diagnosed high blood pressure, then hearing problems, then depression. It took time, tests, and persistence for the family to find a name for what was happening. Such delays are not unusual.

A recent report by STRiDE on dementia in Kenya reveals that it is often diagnosed at advanced stages, reflecting delays in recognition and referral. Stigma and cultural beliefs further delay care.

Getting a diagnosis, however, is only the beginning of a far more gruelling journey, and it is precisely where digital tools and AI platforms are beginning to position themselves.

Kabui and her family spent nearly two years navigating her mother’s confirmed dementia diagnosis alone. They doubted the diagnosis and sought second opinions until 2023, when an online search finally connected them with support.

“I just Googled dementia in Kenya and came across information and different phone numbers that I tried calling.”

Grace, the woman who answered, was kind and knowledgeable about dementia, and for the first time, Kabui felt heard and understood. Grace introduced her to the Alzheimer’s Dementia Organisation Kenya (ADOK), where she has found her community.

Toll on Caregivers

Marilyn Kamuru, a writer-consultant and practising lawyer, became her mother’s sole caregiver after a formal diagnosis in 2024. She says there is no such thing as a typical day for a caregiver. Dementia care is not only medical. It is legal, financial, emotional, and logistical all at once.

“The caregiver steps in the space between the dementia patient and the world,” Kamuru says. “Suddenly, you are their chief advocate, whether it’s with doctors or with banks or with people who are trying to swindle them.”

Kamuru moved back from Diani to Nairobi to be closer to her mother and soon found herself managing everything from her mother’s businesses to hospital admissions. Eventually, she hired a caregiver. She describes the adrenaline of the caregiving phone call: the ring that instantly rearranges your nervous system.

“When that call comes, my heart starts racing because it could be anything,” she says. “And whatever it is, I’m the one who has to deal with it.”

It is not only the tasks but also the constant readiness that drains her. The way her mind stays switched on even when she is trying to write, work, or parent her own children.

“Honestly, without ADOK and without that support system, I would have gone crazy,” Kamuru says.

Her mother initially denied the diagnosis, insisting she was fine. Extended family members also resisted interventions Kamuru considered necessary to protect her widowed mother’s assets, including legal safeguards.

“I’d rather you perceive me as disrespectful, but I protect my mother,” she says, describing how caregiving can force a daughter to push against family expectations.

Gender is the subtext that became the plot. Kamuru is blunt about how the burden landed.

“For women, it is expected, right? So, I’m almost not given an option,” she says.

Her brother, she adds, is not involved in caregiving and faces little social penalty. For her, the expectation is constant, woven into relatives’ opinions, institutions’ assumptions, and the idea that women will absorb whatever a family cannot afford to outsource.

The result, she says, is invisible labour that remains heavy even when a family pays for help. Her experience reflects broader patterns. A 2025 report by the United Nations Development Programme (UNDP) indicates that women in Kenya spend 3.5 times more hours daily on caregiving and domestic duties than men.

Hospitals reflect the same logic. In one of her visits, Kamuru was asked to provide a 24-hour bedside presence because the system is not designed to care for patients with cognitive decline.

“Dementia is a medical diagnosis. Why isn’t the hospital equipped to take care of a dementia patient?” she poses.

She recalls a night nurse laughing at her mother’s forgetfulness and the anger she felt, not only at the individual but also at the training gap.

“There are times when I’m having to explain to a nurse about sundowning, and I’m like, ‘This is not okay,’” she says.

Research echoes what caregivers keep repeating: the work is relentless, and knowledge gaps compound it. A qualitative study of caregivers across three Kenyan counties found that caring for a person living with dementia required significant mental and physical effort and highlighted a lack of knowledge and awareness of dementia among families, health professionals, and the public.

Caregivers do not only carry the labour of bathing, feeding, and supervision. They also carry the labour of educating everyone else, from relatives to nurses, often while grieving the slow changes in the person they love.

It is in this gap, between exhausted families and limited systems, that technology is beginning to enter the conversation.

Promise of AI

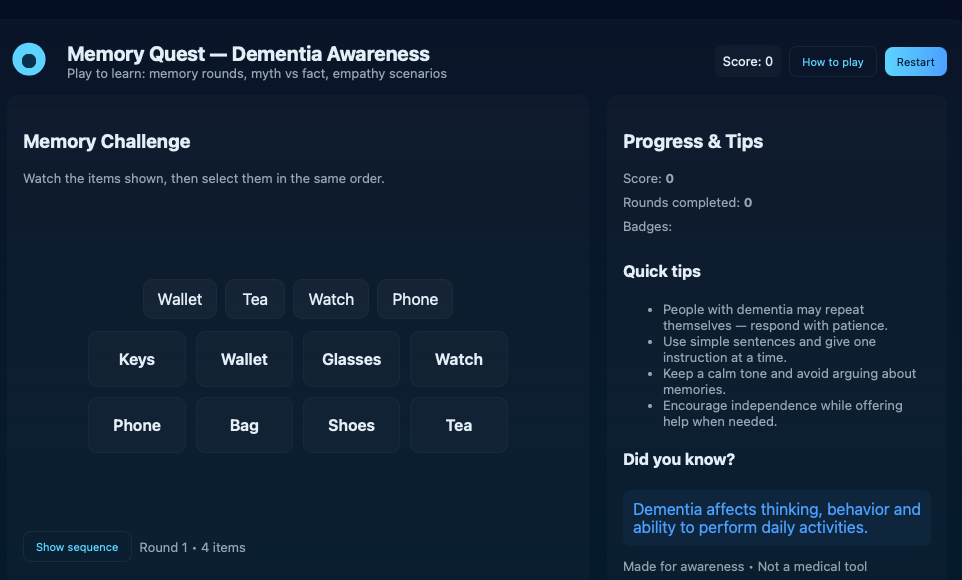

In 2023, a browser-based platform called Memory Quest/Dementia AI launched with games, myth-busting quizzes, and empathy scenarios. It does not claim to diagnose. It aims to teach families how dementia shows up in daily life and to start a conversation early.

Collins Mwangi, founder of the Dementia AI startup, says dementia is poorly understood. His own motivation came from watching what happens inside homes when memory and mood begin to shift. He describes the questions that pushed him into this work: “Why do you have to change diapers for this old man…and why do they keep forgetting?”

The most direct AI feature, Mwangi says, is a chatbot that is sometimes deployed during open campaign periods. A caregiver might use it to describe what they are seeing, ask about early signs, or check whether a behaviour change could be dementia or ordinary forgetfulness.

He argues that the primary benefits are its speed and wide accessibility. Since people are already accustomed to searching online, a tool that provides answers in simple language can quickly bridge the gap between initial concern and decisive action.

Kabui, the registered dietitian, says the platform felt close to her experience as a caregiver.

“The games felt relevant to my real life,” she says, remembering prompts about music and a scenario where a neighbour calls you by the wrong name. What she valued was not the idea of a digital fix, but the way good information can change a caregiver’s reflexes. She wonders what she might have noticed earlier if she had encountered such prompts before her mother’s diagnosis. For caregivers, these are not abstract puzzles. They are rehearsals for real conversations.

If AI tools become embedded in caregiving, the stakes extend beyond convenience. Families who can access digital literacy, smartphones, and stable internet may benefit from early information and support.

For those without access, the gap could widen further, locking them out of new forms of care. Developers, researchers, and health platforms stand to gain datasets and insights. Caregivers gain information, but not necessarily relief.

Policy Vacuum

These tools exist in a wider policy vacuum. Kenya’s health system has limited formal structures for long-term dementia care, leaving families to improvise. AI-enabled tools are expanding faster than the regulatory frameworks designed to protect users.

While Kenya has the Data Protection Act (2019), the Digital Health Act (2023), and the National AI Strategy (2025-2030), AI-specific guidance for emerging health tools remains limited in practice.

Caregivers are often unaware of how their sensitive information, including symptoms, routines, and delicate behavioural changes, might be shared or used once recorded on a digital platform.

Some risks remain largely unspoken. Symptom-checking tools may misinterpret cultural behaviour or language. Chatbots can normalise surveillance inside homes under the banner of safety. Data collected from African caregivers could be extracted into global systems without accountability or benefit-sharing.

And algorithmic bias, especially in models trained on datasets that rarely represent African families, may misread both disease and care. It is also unclear where accountability would fall if AI-generated guidance proves misleading for families seeking support.

A meaningful response would require coordinated action: health policy that recognises dementia as a long-term care priority, digital regulation that protects caregiver data, and economic frameworks that treat unpaid care as labour rather than an obligation.

Kamuru approaches the AI boom with caution. She wonders where caregivers’ information goes and who can access it.

“I am very concerned about the extraction of our data, our lived experiences, especially as African people, with a lack of attribution for our knowledge,” she says.

The question is, who owns caregiver experience once it becomes data? Stories shared in chatbots and symptom trackers form a body of knowledge with potential commercial and research value.

Founder Mwangi says his platform is intentionally light on personal data outside campaign periods, such as those around World Alzheimer’s Day, and that what the team sees day-to-day is limited to Google Analytics traffic counts rather than identities.

“We are only able to see analytics data,” he says. He adds that the educational games are not currently designed to record individual performance.

Where the platform does request personal details, he frames it as campaign-specific, such as updates on webinars, workshops, and follow-up with families seeking dementia-related support, and says consent is explicit.

“If they click agree, then it means they’ve given us consent. If they don’t agree, they still have the opportunity to use the platform without any restrictions. Remember, our organisation, Memey AI, is not a profit-making organisation.”

In joint campaigns with partners like Women for Dementia Africa, some of the data from the platform’s users can be retrieved and shared with collaborators.

“For now, the data generated from the games on our platform isn’t a priority for us, because neither the organisation nor I are actively using it,” Mwangi says.

“However, the data can be accessed and shared with partners on joint projects. As long as there is a data source, that information can be retrieved when needed,” he adds.

Risk of Extraction

Consent, in practice, is complicated. Caregivers seeking help may agree to data sharing without fully understanding how information might be used later, whether for partnerships, research, or future monetisation. Even when a platform is not currently commercial, the infrastructure it builds can carry long-term economic value.

The claim that data is “not being used now” offers limited reassurance. Digital health ecosystems evolve quickly, and datasets once considered incidental can become central assets. If caregiver insights eventually shape products, services, or research outputs, questions of attribution, benefit, and ownership become unavoidable.

Because caregiving labour is feminised, the risk is also gendered: women generate the experiential knowledge, while institutions and platforms retain the capacity to scale and potentially profit from it.

The economic value of unpaid caregiving, estimated by the Kenya National Bureau of Statistics at Sh2.5 trillion in 2021, is rarely acknowledged in formal economic planning or supported through social protection policies.

The issue is no longer only that women carry the burden; it is that multiple systems, social, economic, medical and technological, depend on them doing so.

Beyond the Screen

Kenya, like much of the world, is aging. Dementia cases are expected to rise, and the demand for care will expand faster than health systems can respond. In that future, technology will not arrive as an optional tool; it will shape how care is organised, delivered, and valued.

AI may streamline information and coordination, but it does not replace the human labour of feeding, bathing, reassuring, and advocating. Yet the heaviest parts of caregiving remain invisible to health systems and policymakers.

The risk is that automation reduces visibility rather than workload, making caregiving appear more manageable while the same women continue carrying it. Will emerging technologies shift responsibility toward institutions, policy, and public investment, or will they reinforce the expectation that families, and especially women, absorb the difference?

Rosemary Kabui warns that most caregivers are too burnt out to get online, leaving her unsure of how AI helps in the long term. Information can reduce shame and isolation, but it cannot cook a meal or provide a moment of rest.

Marilyn Kamuru can read every resource available and still find herself explaining basic symptoms to an ignorant nurse. Awareness is a start, but it is not a substitute for a functioning health system.

If technology is to be an enabler, it will need to do more than provide information. It will need to connect caregivers to systems where the burden of care is shared beyond the household.

If developers want to build tools for caregivers, Kamuru says, they should start by listening deeply and speaking respectfully.

“If you’re going to try and design something for caregivers in Africa, talk to caregivers in Africa and ask them what they want and what bothers them,” she says.

If designed with caregivers, AI could strengthen early diagnosis, training, and support networks. If built without them, it may deepen inequality, extracting insight from homes while leaving the labour unchanged. The difference will depend not on the technology itself, but on whose voices shape it, whose data informs it, and whether governments treat care as infrastructure rather than a private duty.

In the end, caregivers measure a tool by what happens after the screen goes dark. Kabui can finish a digital quiz, then return to the kitchen, where breakfast still takes an hour. When AI enters the living room, it does more than assist care; it decides who is seen, who is supported, and who continues to carry the quiet burden.

This article was produced as part of the Gender+AI Reporting Fellowship, with support from the Africa Women’s Journalism Project (AWJP) in partnership with DW Akademie. The journalist used AI tools as research aids to review and summarise relevant policy and research documents and extract key statistics. All interviews, analysis, editorial decisions and final wording were done by the reporter, in line with Talk Africa’s editorial standards.